Complexity

In general usage, complexity tends to be used to characterize something with many parts in intricate arrangement. The study of these complex linkages is a central concern of network science. In science, there are currently a number of approaches to characterizing complexity, many of which are reflected in this article.

Definitions are often tied to the concept of a ‘system’: a set of parts or elements whose relationships with one another are differentiated from their relationships with elements outside the relational regime. Many definitions postulate or assume that complexity expresses a condition of numerous elements in a system and numerous forms of relationships among the elements. At the same time, what is complex and what is simple is relative and changes with time.

Some definitions key on the question of the probability of encountering a given condition of a system once characteristics of the system are specified. Warren Weaver posited that the complexity of a particular system is the degree of difficulty in predicting the properties of the system if the properties of the system’s parts are given. In Weaver's view, complexity comes in two forms: disorganized complexity and organized complexity. [1] Weaver’s paper has influenced contemporary thinking about complexity. [2]

The approaches that embody concepts of systems, multiple elements, multiple relational regimes, and state spaces might be summarized as implying that complexity arises from the number of distinguishable relational regimes (and their associated state spaces) in a defined system. Some definitions relate to the algorithmic basis for the expression of a complex phenomenon, model, or mathematical expression, as set out later in this article.

Disorganized complexity vs. organized complexity

One of the problems in addressing complexity issues has been distinguishing conceptually between the large number of variances in relationships found in random collections and the sometimes large, but smaller, number of relationships between elements in systems where constraints (related to the correlation of otherwise independent elements) simultaneously reduce the variations from element independence and create distinguishable regimes of more uniform, or correlated, relationships or interactions.

Weaver perceived and addressed this problem, in at least a preliminary way, in drawing a distinction between 'disorganized complexity' and 'organized complexity'.

In Weaver's view, disorganized complexity results from the particular system having a very large number of parts, say millions of parts, or many more. Though the interactions of the parts in a 'disorganized complexity' situation can be seen as largely random, the properties of the system as a whole can be understood by using probability and statistical methods.

A prime example of disorganized complexity is a gas in a container, with the gas molecules as the parts. Some would suggest that a system of disorganized complexity may be compared, for example, with the (relative) simplicity of planetary orbits: the latter can be predicted by applying Newton’s laws of motion, though this example involves highly correlated events.
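To illustrate how the properties of a disorganized system yield to statistics, the following minimal sketch draws random velocities for many hypothetical gas molecules. It is an illustration, not taken from Weaver: the unit mass, the standard-normal velocity components (a Maxwell-Boltzmann distribution in units where kT/m = 1), and the function name `mean_kinetic_energy` are assumptions. Individual velocities are unpredictable, but the mean kinetic energy per molecule settles near its expected value of 1.5 as the number of parts grows.

```python
import random

def mean_kinetic_energy(n_molecules: int, mass: float = 1.0, seed: int = 0) -> float:
    """Average kinetic energy of n molecules with random 3D velocities.

    Assumption: each velocity component is standard normal, which matches a
    Maxwell-Boltzmann distribution in units where kT/m = 1.
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_molecules):
        vx, vy, vz = (rng.gauss(0.0, 1.0) for _ in range(3))
        total += 0.5 * mass * (vx * vx + vy * vy + vz * vz)
    return total / n_molecules

# Expected value: 0.5 * mass * 3 * variance = 1.5 for these parameters.
for n in (10, 1_000, 100_000):
    print(n, round(mean_kinetic_energy(n), 3))
```

The fluctuation around 1.5 shrinks roughly as one over the square root of the number of molecules, which is why statistical methods become more reliable, not less, as the number of parts grows.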

Organized complexity, in Weaver's view, resides in nothing other than the non-random, or correlated, interaction between the parts. These non-random, or correlated, relationships create a differentiated structure that can, as a system, interact with other systems. The coordinated system manifests properties not carried by, or dictated by, individual parts. The organized aspect of this form of complexity, vis-à-vis systems other than the subject system, can be said to “emerge” without any “guiding hand.”

The number of parts does not have to be very large for a particular system to have emergent properties. The properties of a system of organized complexity (the behavior among those properties) may be understood through modeling and simulation, particularly computer modeling and simulation. An example of organized complexity is a city neighborhood as a living mechanism, with the neighborhood’s people among the system’s parts. [3]
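Emergence in a small system can be illustrated with Conway's Game of Life, a cellular automaton that the article does not discuss and that is used here only as a hedged example of computer simulation. Every cell obeys the same local rule, yet the five-cell "glider" below travels diagonally across the grid, a property of the pattern rather than of any single cell. The set-of-coordinates representation and the function name `step` are choices made for this sketch.

```python
from collections import Counter

Cell = tuple[int, int]  # (row, column)

def step(live: set[Cell]) -> set[Cell]:
    """Advance Conway's Game of Life by one generation on an unbounded grid."""
    # Count how many live neighbours each cell has.
    counts = Counter(
        (r + dr, c + dc)
        for r, c in live
        for dr in (-1, 0, 1)
        for dc in (-1, 0, 1)
        if (dr, dc) != (0, 0)
    )
    # Birth with exactly 3 neighbours; survival with 2 or 3.
    return {cell for cell, n in counts.items() if n == 3 or (n == 2 and cell in live)}

glider = {(0, 1), (1, 2), (2, 0), (2, 1), (2, 2)}

state = glider
for _ in range(4):
    state = step(state)

# After four generations the same shape reappears, shifted one cell down and right.
print(state == {(r + 1, c + 1) for r, c in glider})  # True
```

Nothing in the rule mentions movement; the glider's motion is visible only at the level of the pattern.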

Sources and factors of complexity

The source of disorganized complexity is the large number of parts in the system of interest, and the lack of correlation between elements in the system.

There is no consensus at present on general rules regarding the sources of organized complexity, though the lack of randomness implies correlations between elements. See, e.g., Robert Ulanowicz's treatment of ecosystems. [4] Consistent with prior statements here, the number of parts (and types of parts) in the system and the number of relations between the parts would have to be non-trivial; however, there is no general rule to separate “trivial” from “non-trivial.”

Complexity of an object or system is a relative property. For instance, for many functions (problems), a computational complexity measure such as computation time is smaller when multitape Turing machines are used than when Turing machines with one tape are used. Random-access machines allow time complexity to be decreased even further (Greenlaw and Hoover 1998: 226), while inductive Turing machines can lower even the complexity class of a function, language, or set (Burgin 2005). This shows that the tools used to carry out an activity can be an important factor of complexity.
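The dependence of time complexity on the machine model can be sketched by counting steps for one problem, recognising palindromes, under two simplified cost models. It is known that a one-tape Turing machine needs on the order of n² steps for this problem, while machines with more tapes or random access can do it in linear time. The functions below are hypothetical cost models written for this illustration, not full machine simulators: the first counts only the head moves of the classic one-tape zig-zag strategy, and the second counts comparisons when any position can be read in one step.

```python
def zigzag_head_moves(s: str) -> tuple[bool, int]:
    """Head moves of a simplified one-tape zig-zag palindrome check.

    The head starts on the leftmost symbol. Each round it (conceptually)
    erases that symbol, walks to the rightmost remaining symbol, compares
    and erases it, then walks back to the new leftmost symbol. Overshoots
    needed to detect blanks are ignored in this cost model.
    """
    left, right = 0, len(s) - 1
    head = 0
    moves = 0
    while left < right:
        first = s[left]
        moves += right - head          # walk right to the last symbol
        head = right
        if s[right] != first:
            return False, moves
        moves += head - (left + 1)     # walk back to the new first symbol
        head = left + 1
        left, right = left + 1, right - 1
    return True, moves

def random_access_comparisons(s: str) -> tuple[bool, int]:
    """Comparisons needed when any position can be read in one step."""
    comparisons = 0
    for i in range(len(s) // 2):
        comparisons += 1
        if s[i] != s[-1 - i]:
            return False, comparisons
    return True, comparisons

for n in (10, 100, 1000):
    word = "a" * n
    print(n, zigzag_head_moves(word)[1], random_access_comparisons(word)[1])
```

For an even-length palindrome of length n, the zig-zag model makes n(n - 1)/2 head moves against n/2 comparisons under random access, so the same problem looks quadratic or linear depending on the tool used.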

Specific meanings of complexity

In several scientific fields, "complexity" has a specific meaning:

- In computational complexity theory, the amounts of resources required to execute algorithms are studied. The most popular types of computational complexity are the time complexity of a problem, equal to the number of steps that it takes to solve an instance of the problem as a function of the size of the input (usually measured in bits) using the most efficient algorithm, and the space complexity of a problem, equal to the amount of memory (e.g., cells of the tape) that the most efficient algorithm uses to solve an instance of the problem, again as a function of the size of the input. This allows computational problems to be classified by complexity class (such as P and NP). An axiomatic approach to computational complexity was developed by Manuel Blum. It allows one to deduce many properties of concrete computational complexity measures, such as time complexity or space complexity, from properties of axiomatically defined measures.
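As a hedged illustration of measuring time as a number of steps in terms of input size, the sketch below counts loop iterations for two ways of searching a sorted list. Note that it compares two particular algorithms; establishing the complexity of the problem itself would also require showing that no algorithm does better. The function names are invented for this example.

```python
def linear_search_steps(xs: list[int], target: int) -> int:
    """Number of elements examined by a left-to-right scan."""
    steps = 0
    for x in xs:
        steps += 1
        if x == target:
            break
    return steps

def binary_search_steps(xs: list[int], target: int) -> int:
    """Number of probes made by binary search on a sorted list."""
    lo, hi = 0, len(xs) - 1
    steps = 0
    while lo <= hi:
        steps += 1
        mid = (lo + hi) // 2
        if xs[mid] == target:
            break
        if xs[mid] < target:
            lo = mid + 1
        else:
            hi = mid - 1
    return steps

for n in (16, 1024, 1_000_000):
    data = list(range(n))
    missing = n  # larger than every element: the worst case for both searches
    print(n, linear_search_steps(data, missing), binary_search_steps(data, missing))
```

In this worst case the scan takes n steps while binary search takes about log₂ n + 1, so the step count grows linearly for one algorithm and logarithmically for the other.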

- In algorithmic information theory, the Kolmogorov complexity (also called descriptive complexity, algorithmic complexity or algorithmic entropy) of a string is the length of the shortest binary program that outputs that string. Different kinds of Kolmogorov complexity are studied: the uniform complexity, prefix complexity, monotone complexity, time-bounded Kolmogorov complexity, and space-bounded Kolmogorov complexity. An axiomatic approach to Kolmogorov complexity based on Blum axioms (Blum 1967) was introduced by Mark Burgin in the paper presented for publication by Andrey Kolmogorov (Burgin 1982). The axiomatic approach encompasses other approaches to Kolmogorov complexity. It is possible to treat different kinds of Kolmogorov complexity as particular cases of axiomatically defined generalized Kolmogorov complexity. Instead of proving similar theorems, such as the basic invariance theorem, for each particular measure, it is possible to deduce all such results easily from one corresponding theorem proved in the axiomatic setting. This is a general advantage of the axiomatic approach in mathematics. The axiomatic approach to Kolmogorov complexity was further developed in the book (Burgin 2005) and applied to software metrics (Burgin and Debnath, 2003; Debnath and Burgin, 2003).
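Kolmogorov complexity itself is uncomputable, but any program that prints a string gives an upper bound on it. The sketch below uses Python source text as the description language, an assumption standing in for the binary programs of the definition, to show that a highly regular string of 1,001 characters has a description of only 17 characters. By the invariance theorem, switching to another universal description language changes such bounds by at most an additive constant. The helper `output_of` is hypothetical and should only be used on trusted program text, since it calls `exec`.

```python
import contextlib
import io

def output_of(program: str) -> str:
    """Run a Python program (trusted text only) and return what it prints."""
    buffer = io.StringIO()
    with contextlib.redirect_stdout(buffer):
        exec(program, {})
    return buffer.getvalue()

target = "01" * 500 + "\n"           # 1,001 characters including the newline
description = 'print("01" * 500)'    # a 17-character description of it

assert output_of(description) == target
print(len(target), len(description))  # 1001 17
```

The bound only works in one direction: a short program proves a string is simple, but failing to find one never proves that a string is complex.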

- In information processing, complexity is a measure of the total number of properties transmitted by an object and detected by an observer. Such a collection of properties is often referred to as a state.

- In physical systems, complexity is a measure of the probability of the state vector of the system. This should not be confused with entropy; it is a distinct mathematical measure, one in which two distinct states are never conflated and considered equal, as is done for the notion of entropy in statistical mechanics.

- In mathematics, Krohn-Rhodes complexity is an important topic in the study of finite semigroups and automata.

There are different specific forms of complexity:

- In the sense of how complicated a problem is from the perspective of the person trying to solve it, limits of complexity are measured using a term from cognitive psychology, namely the hrair limit.

- Unruly complexity, a term coined by Peter Taylor, denotes situations that do not have clearly defined boundaries, coherent internal dynamics, or simply mediated relations with their external context.

- Complex adaptive system denotes systems that have some or all of the following attributes: [5]

- The number of parts (and types of parts) in the system and the number of relations between the parts is non-trivial – however, there is no general rule to separate “trivial” from “non-trivial;”

- The system has memory or includes feedback;

- The system can adapt itself according to its history or feedback;

- The relations between the system and its environment are non-trivial or non-linear;

- The system can be influenced by, or can adapt itself to, its environment; and

- The system is highly sensitive to initial conditions.

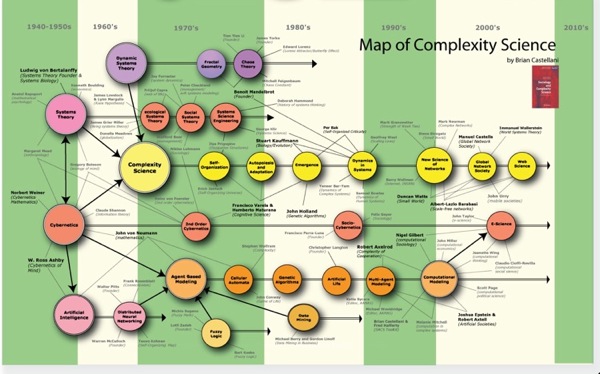

Study of complexity

Complexity has always been a part of our environment, and therefore many scientific fields have dealt with complex systems and phenomena. Indeed, some would say that only what is somehow complex – what displays variation without being random – is worthy of interest.

The term complex is often confused with the term complicated. In today’s systems, this is the difference between myriad connecting "stovepipes" and effective "integrated" solutions. [6] This means that complex is the opposite of independent, while complicated is the opposite of simple.

While this has led some fields to come up with specific definitions of complexity, there is a more recent movement to regroup observations from different fields to study complexity in itself, whether it appears in anthills, human brains, or stock markets. One such interdisciplinary group of fields is relational order theories.

Complexity topics

Complex behaviour

The behaviour of a complex system is often said to be due to emergence and self-organization. Chaos theory has investigated the sensitivity of systems to variations in initial conditions as one cause of complex behaviour.
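Sensitivity to initial conditions can be seen in the logistic map $x_{n+1} = r x_n (1 - x_n)$, a standard example from chaos theory that the article does not itself mention. In this sketch (parameter values, step count, and the function name are assumptions), r = 4 puts the map in a chaotic regime, and two starting values that differ by only 10⁻¹⁰ soon produce trajectories that no longer resemble each other.

```python
def logistic_trajectory(x0: float, r: float = 4.0, steps: int = 60) -> list[float]:
    """Iterate the logistic map x -> r * x * (1 - x) starting from x0."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs

a = logistic_trajectory(0.2)
b = logistic_trajectory(0.2 + 1e-10)

# Print the gap between the two trajectories every 10 steps.
for n in range(0, 61, 10):
    print(n, abs(a[n] - b[n]))
```

At r = 4 the gap roughly doubles each step on average, so an initial difference of 10⁻¹⁰ grows to the size of the whole interval after a few dozen iterations.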

Complex mechanisms

Recent developments around artificial life, evolutionary computation and genetic algorithms have led to an increasing emphasis on complexity and complex adaptive systems.
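Evolutionary computation can be illustrated with a very small genetic algorithm on the toy "OneMax" problem (maximise the number of 1 bits), which is not discussed in the article and serves only as an example. Tournament selection, one-point crossover, and bit-flip mutation act on a population of bit strings, and carrying the best individual over unchanged (elitism) guarantees that the best fitness never decreases. Population size, mutation rate, tournament size, and all function names are assumptions for this sketch.

```python
import random

def onemax(bits: list[int]) -> int:
    """Fitness: the number of 1 bits."""
    return sum(bits)

def evolve(length=32, pop_size=30, generations=60, mutation_rate=0.02, seed=1):
    """Run a minimal genetic algorithm; return the best fitness per generation."""
    rng = random.Random(seed)
    pop = [[rng.randint(0, 1) for _ in range(length)] for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        pop.sort(key=onemax, reverse=True)
        history.append(onemax(pop[0]))
        next_pop = [pop[0][:]]  # elitism: keep the best individual unchanged
        while len(next_pop) < pop_size:
            # Tournament selection: the fitter of 3 random individuals, twice.
            p1 = max(rng.sample(pop, 3), key=onemax)
            p2 = max(rng.sample(pop, 3), key=onemax)
            cut = rng.randrange(1, length)  # one-point crossover
            child = p1[:cut] + p2[cut:]
            # Flip each bit independently with probability mutation_rate.
            child = [b ^ 1 if rng.random() < mutation_rate else b for b in child]
            next_pop.append(child)
        pop = next_pop
    return history

history = evolve()
print(history[0], history[-1])
```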

Complex simulations

In social science, researchers study the emergence of macro-properties from micro-properties, an approach known in sociology as the macro-micro view. The topic is commonly recognized as social complexity and is often related to the use of computer simulation in social science, i.e., computational sociology.
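The macro-from-micro question is often illustrated with Schelling's segregation model, which the article does not name and which is included here only as a hedged example of computational sociology. In this one-dimensional sketch, agents of two types sit on a ring with some empty sites. An agent is unhappy if fewer than half of the occupied sites within distance 2 hold its own type, and unhappy agents move to a random empty site. The ring size, radius, threshold, round limit, and function names are all assumptions.

```python
import random

EMPTY = 0  # marker for an unoccupied site; agent types are 1 and 2

def same_type_share(grid: list[int], i: int, radius: int = 2) -> float:
    """Fraction of occupied neighbours within `radius` (on a ring) sharing agent i's type."""
    n = len(grid)
    neighbours = [grid[(i + d) % n] for d in range(-radius, radius + 1) if d != 0]
    occupied = [g for g in neighbours if g != EMPTY]
    if not occupied:
        return 1.0  # an isolated agent is treated as content
    return sum(g == grid[i] for g in occupied) / len(occupied)

def segregation(grid: list[int]) -> float:
    """Macro-level measure: the average same-type share over all agents."""
    agents = [i for i, g in enumerate(grid) if g != EMPTY]
    return sum(same_type_share(grid, i) for i in agents) / len(agents)

def run_schelling(size=100, empty_fraction=0.1, threshold=0.5, rounds=50, seed=2):
    rng = random.Random(seed)
    n_empty = int(size * empty_fraction)
    n_agents = size - n_empty
    grid = [1] * (n_agents // 2) + [2] * (n_agents - n_agents // 2) + [EMPTY] * n_empty
    rng.shuffle(grid)
    before = segregation(grid)
    for _ in range(rounds):
        moved = False
        for i in range(size):
            if grid[i] != EMPTY and same_type_share(grid, i) < threshold:
                # Move this unhappy agent to a randomly chosen empty site.
                j = rng.choice([k for k, g in enumerate(grid) if g == EMPTY])
                grid[j], grid[i] = grid[i], EMPTY
                moved = True
        if not moved:
            break  # every agent is content
    return grid, before, segregation(grid)

grid, before, after = run_schelling()
print(f"segregation before: {before:.2f}, after: {after:.2f}")
```

No agent asks for segregation, only for not being in a local minority, yet runs of this kind typically end with a noticeably higher segregation value than the random start.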

Complex systems

Systems theory has long been concerned with the study of complex systems (in recent times, complexity theory and complex systems have also been used as names of the field). These systems can be biological, economic, technological, etc. More recently, complexity has become a natural domain of interest for research on real-world socio-cognitive systems and for emerging systemics research. Complex systems tend to be high-dimensional, non-linear and hard to model. In specific circumstances they may exhibit low-dimensional behaviour.

Complexity in data

In information theory, algorithmic information theory is concerned with the complexity of strings of data.

Complex strings are harder to compress. While intuition tells us that this may depend on the codec used to compress a string (a codec could in theory be created in any arbitrary language, including one in which the very small command "X" could cause the computer to output a very complicated string like '18995316'), any two Turing-complete languages can be implemented in each other. This means that the lengths of two encodings in different languages will differ by at most the length of the "translation" program, which becomes negligible for sufficiently large data strings.

These algorithmic measures of complexity tend to assign high values to random noise. However, those studying complex systems would not consider randomness as complexity.
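Compressed length gives a practical, computable stand-in for algorithmic complexity. In the sketch below, Python's standard `zlib` module plays the role of the idealised shortest program; this is an assumption, since any real compressor only approximates that ideal from above. A periodic byte string shrinks to a tiny fraction of its size, while random bytes barely compress at all, which is exactly the sense in which algorithmic measures assign high values to noise.

```python
import os
import zlib

def compression_ratio(data: bytes) -> float:
    """Compressed size divided by original size (lower means more regular)."""
    return len(zlib.compress(data, 9)) / len(data)

n = 100_000
regular = b"0123456789" * (n // 10)   # highly structured
noise = os.urandom(n)                 # random bytes

print(f"regular: {compression_ratio(regular):.3f}")
print(f"noise:   {compression_ratio(noise):.3f}")
```

A ratio near or slightly above 1 for the random bytes reflects the compressor's small fixed overhead: there is no shorter description for it to find.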

Information entropy is also sometimes used in information theory as indicative of complexity.
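Information entropy of a symbol distribution, $H = -\sum_i p_i \log_2 p_i$ bits per symbol, can be estimated from the symbol frequencies of a string. The sketch below (the function name is an assumption) computes this empirical entropy. Because it depends only on how often each symbol occurs, a string and any rearrangement of it with the same counts receive the same value.

```python
from collections import Counter
from math import log2

def empirical_entropy(s: str) -> float:
    """Shannon entropy, in bits per symbol, of the symbol frequencies in s."""
    if not s:
        return 0.0
    n = len(s)
    # Sum of p * log2(1/p), which equals -sum(p * log2(p)).
    return sum((c / n) * log2(n / c) for c in Counter(s).values())

print(empirical_entropy("aaaaaaaa"))   # 0.0: one symbol, no uncertainty
print(empirical_entropy("abababab"))   # 1.0
print(empirical_entropy("aabbabba"))   # 1.0: same counts, different order
print(empirical_entropy("abcdabcd"))   # 2.0
```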

Applications of complexity

Computational complexity theory is the study of the complexity of problems, that is, the difficulty of solving them. Problems can be classified by complexity class according to the time it takes for an algorithm (usually a computer program) to solve them as a function of the problem size. Some problems are difficult to solve, while others are easy. For example, the best known algorithms for some difficult problems take an amount of time exponential in the size of the problem. Take the travelling salesman problem: it can be solved in time $O(n^2 2^n)$, where n is the size of the network to visit (say, the number of cities the travelling salesman must visit exactly once). As the size of the network of cities grows, the time needed to find the route grows (more than) exponentially.
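The $O(n^2 2^n)$ bound for the travelling salesman problem is achieved by the Held-Karp dynamic-programming algorithm, named here as the standard technique since the text does not specify one. The sketch below stores, for each subset of cities and each end city, the cheapest path from city 0 that visits exactly that subset; there are about $n 2^n$ such states and each is extended in $O(n)$ time. The function name and the distance matrix in the usage example are made up for illustration.

```python
from itertools import combinations

def held_karp(dist: list[list[float]]) -> float:
    """Length of the shortest tour that visits every city once and returns to city 0."""
    n = len(dist)
    if n == 1:
        return 0.0
    # best[(mask, j)]: cheapest path that starts at city 0, visits exactly the
    # cities in bitmask `mask` (city 0 not included), and ends at city j.
    best = {}
    for j in range(1, n):
        best[(1 << j, j)] = dist[0][j]
    for size in range(2, n):
        for subset in combinations(range(1, n), size):
            mask = 0
            for city in subset:
                mask |= 1 << city
            for j in subset:
                prev_mask = mask & ~(1 << j)
                best[(mask, j)] = min(
                    best[(prev_mask, k)] + dist[k][j] for k in subset if k != j
                )
    full = (1 << n) - 2  # every city except city 0
    return min(best[(full, j)] + dist[j][0] for j in range(1, n))

# Four cities at the corners of a unit square; the optimal tour is the perimeter.
d = 2 ** 0.5
square = [
    [0.0, 1.0, d, 1.0],
    [1.0, 0.0, 1.0, d],
    [d, 1.0, 0.0, 1.0],
    [1.0, d, 1.0, 0.0],
]
print(held_karp(square))  # 4.0
```

Compared with trying all (n - 1)! orderings, the table of subsets is what brings the cost down to $O(n^2 2^n)$, which is still exponential.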

Even though a problem may be computationally solvable in principle, in actual practice it may not be that simple: such problems might require large amounts of time or an inordinate amount of space. Computational complexity can be investigated on the basis of time, memory, or other resources used to solve the problem. Time and space are the two most important and popular considerations when problems of complexity are analyzed.

There exists a class of problems that, although solvable in principle, require so much time or space that attempting to solve them is impractical. These problems are called intractable.

There is another form of complexity called hierarchical complexity. It is orthogonal to the forms of complexity discussed so far, which are called horizontal complexity.

References

- Weaver, Warren (1948), "Science and Complexity", American Scientist 36: 536 (Retrieved on 2007-11-21.)

- Johnson, Steven (2001). Emergence: the connected lives of ants, brains, cities, and software. New York: Scribner. p. 46. ISBN 0-684-86875-X.

- Jacobs, Jane (1961). The Death and Life of Great American Cities. New York: Random House.

- Ulanowicz, Robert (1997). Ecology, the Ascendant Perspective. Columbia University Press.

- Johnson, Neil F. (2007). Two’s Company, Three is Complexity: A simple guide to the science of all sciences. Oxford: Oneworld. ISBN 978-1-85168-488-5.

- (Lissack and Roos, 2000)

Further reading

- Lewin, Roger (1992). Complexity: Life at the Edge of Chaos. New York: Macmillan Publishing Co. ISBN 9780025704855.

- Waldrop, M. Mitchell (1992). Complexity: The Emerging Science at the Edge of Order and Chaos. New York: Simon & Schuster. ISBN 9780671767891.

- Czerwinski, Tom; David Alberts (1997). Complexity, Global Politics, and National Security. National Defense University. ISBN 9781579060466.

- Czerwinski, Tom (1998). Coping with the Bounds: Speculations on Nonlinearity in Military Affairs. CCRP. ISBN 9781414503158 (from Pavilion Press, 2004).

- Lissack, Michael R.; Johan Roos (2000). The Next Common Sense, The e-Manager’s Guide to Mastering Complexity. Intercultural Press. ISBN 9781857882353.

- Solé, R. V.; B. C. Goodwin (2002). Signs of Life: How Complexity Pervades Biology. Basic Books. ISBN 9780465019281.

- Moffat, James (2003). Complexity Theory and Network Centric Warfare. CCRP. ISBN 9781893723115.

- Smith, Edward (2006). Complexity, Networking, and Effects Based Approaches to Operations. CCRP. ISBN 9781893723184.

- Heylighen, Francis (2008), "Complexity and Self-Organization", in Bates, Marcia J.; Maack, Mary Niles, Encyclopedia of Library and Information Sciences, CRC, ISBN 9780849397127

- Greenlaw, R. and Hoover, H.J. Fundamentals of the Theory of Computation, Morgan Kaufmann Publishers, San Francisco, 1998

- Blum, M. (1967) On the Size of Machines, Information and Control, v. 11, pp. 257-265

- Burgin, M. (1982) Generalized Kolmogorov complexity and duality in theory of computations, Notices of the Russian Academy of Sciences, v.25, No. 3, pp.19-23

- Mark Burgin (2005), Super-recursive algorithms, Monographs in computer science, Springer.

- Burgin, M. and Debnath, N. Hardship of Program Utilization and User-Friendly Software, in Proceedings of the International Conference “Computer Applications in Industry and Engineering”, Las Vegas, Nevada, 2003, pp. 314-317

- Debnath, N.C. and Burgin, M., (2003) Software Metrics from the Algorithmic Perspective, in Proceedings of the ISCA 18th International Conference “Computers and their Applications”, Honolulu, Hawaii, pp. 279-282

- Meyers, R.A., (2009) "Encyclopedia of Complexity and Systems Science", ISBN 978-0-387-75888-6

- Caterina Liberati, J. Andrew Howe, Hamparsum Bozdogan, Data Adaptive Simultaneous Parameter and Kernel Selection in Kernel Discriminant Analysis Using Information Complexity, Journal of Pattern Recognition Research, JPRR, Vol 4, No 1, 2009.

External links

- Quantifying Complexity Theory - classification of complex systems

- Complexity Measures - an article about the abundance of not-that-useful complexity measures.

- UC Four Campus Complexity Videoconferences - Human Sciences and Complexity

- Complexity Digest - networking the complexity community

- The Santa Fe Institute - engages in research in complexity related topics